Image credits: Core-design.com and Wallusy from Pixabay. Edited.

If you’ve watched my latest YouTube video and/or read its companion blog post, you might be curious about the results of the survey about Lara Croft I conducted as part of my excruciatingly long research into Lara’s real measurements, their realism (or lack thereof), and the perception that Tomb Raider fans have of her appearance.

You might even be one of the respondents, and maybe you had forgotten all about it because it’s been months since I shared my survey with the fanbase, but now that the video and the huge blogorrhea that accompanied it are out, it’s time for the survey to follow suit. Be warned: this is a long post.

Demographics

I had a total of 208 respondents. That’s frankly a lot more than I was expecting, but the Tomb Raider fanbase is far larger than that; /r/TombRaider alone counts over 45,000 subscribers, so my sample is hardly representative. Still, ya gotta work with what ya got.
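To put a rough number on "hardly representative", here is a minimal sketch of the textbook margin of error for a proportion. It assumes a simple random sample, which a self-selected online survey is not, so treat the result as a best-case floor on the uncertainty. The function name and the use of 45,000 as the population size are illustrative assumptions.

```python
import math

def margin_of_error(p, n, population=None, z=1.96):
    """Approximate 95% margin of error for a proportion p seen in n responses.

    Assumes simple random sampling (an assumption this survey does not meet).
    """
    se = math.sqrt(p * (1 - p) / n)
    if population is not None and population > n:
        # Finite population correction; barely matters when n << population.
        se *= math.sqrt((population - n) / (population - 1))
    return z * se

# Worst case (p = 0.5) for 208 respondents; 45,000 is only the subreddit
# subscriber count mentioned above, used here as a stand-in population.
moe = margin_of_error(0.5, 208, population=45_000)
print(f"{moe:.1%}")
```

Even under that generous assumption, any percentage for the whole sample carries an uncertainty of a bit under 7 percentage points either way, and the smaller subgroups discussed later are noisier still.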

Sorry if some of the charts are a bit messy; I wanted to embed the interactive charts straight from Google Sheets, but it turns out that’s harder than figuring out bra sizes, so I had to be content with regular pictures and some of them get messy with all those percentages.

Sex and orientation

The vast majority of the respondents were male. I really wanted more female respondents than what I got, but I couldn’t run the survey forever. Respondents in the intersex and other/doesn’t say groups were too few to draw any conclusions about their respective groups, so I had no choice but to exclude them from analyses that were meant to find correlations with sex. I did however consider them in all other analyses.

If you don’t aggregate groups, straight people constitute the majority of the respondents, but for a good while at the beginning, the vast majority were gay or lesbian. I don’t know about the fanbases of other games, but the Tomb Raider fanbase is very diverse, and it doesn’t take a survey to notice that.

Someone on Twitter asked me why I wanted to know about the sex and/or orientation of the respondents; besides pure curiosity, the reason was that I wanted to detect bias. As an extreme example, if all straight males had said Lara was not oversexualised and all straight females had said she was, well, I could probably have guessed that something funny was going on there.

However, I’m not sure I asked all the right questions. I only asked about the respondents’ biological sex and not about their gender identity. So, for example, if there was any MtF transsexual person who felt female and was straight with respect to her identity (i.e., attracted to males only), she would still have answered “male, straight,” which isn’t an accurate description of the situation. I am assuming cases like that are the exception and not the norm, but regardless, my survey doesn’t capture them, and I realised this only when it was too late. Something to keep in mind for my next survey, eh?

Age

I asked about the respondents’ age mostly because I was curious to know which age groups were most into which Tomb Raider games. In all analyses that were meant to draw conclusions about age, some groups were aggregated because they were too small to be representative. So, 0-17 was merged into 18-24, and all groups from 45-54 onwards were merged together. They haven’t been excluded from any analyses.
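The merging described above can be sketched as a simple lookup. The bracket labels other than 0-17, 18-24 and 45-54 are assumptions about how the survey options were worded, and the responses are made up for illustration.

```python
from collections import Counter

# Map small brackets onto the merged cohorts. "55-64" and "65+" are
# hypothetical labels standing in for "all groups from 45-54 onwards".
MERGED_COHORT = {
    "0-17": "0-24",
    "18-24": "0-24",
    "45-54": "45+",
    "55-64": "45+",
    "65+": "45+",
}

def merge_bracket(bracket):
    """Return the merged cohort for a bracket; untouched brackets pass through."""
    return MERGED_COHORT.get(bracket, bracket)

# Made-up responses, only to show the effect of merging.
responses = ["0-17", "18-24", "25-34", "35-44", "45-54", "55-64", "25-34"]
cohort_counts = Counter(merge_bracket(b) for b in responses)
print(cohort_counts)
```

Nobody is dropped by this step; small brackets are simply counted together with their neighbours.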

Series enjoyment

This section is all about who played what, and how much they liked what they played. These responses were also used to detect bias, for example to see if people who liked the Survivor trilogy the most were more likely to say that Classic/LAU Lara (henceforth CLL) was oversexualised.

Classic games (TR1-TRAOD)

Most of the respondents (71.6%) liked the classics “a lot” or “quite a bit”. Very few didn’t like them, but a non-negligible chunk of respondents haven’t played any. Isn’t it about time you guys fixed that? 😛

Interestingly, more females than males have enjoyed the classics a lot or quite a bit, and fewer females haven’t played any. There was exactly one female respondent who didn’t like the classics at all. (We all know it’s you, Stella…)

The percentage of players who enjoyed the classics a lot or quite a bit grows visibly with age, while the percentage of those who didn’t play them at all goes down with age. This is an utterly unsurprising result, given that the most recent classic Tomb Raider game came out some 18 years ago. (And I still haven’t played it, goddammit!)

LAU trilogy (Legend, Anniversary, Underworld)

While fewer people liked LAU a lot, more liked them quite a bit; overall, 69.3% of the respondents picked either option, a value that is very comparable to the classics’ 71.6%. Very few people actively disliked LAU, and slightly fewer people didn’t play it compared to people who didn’t play the classics—which I guess is reasonable seeing as how LAU is more recent.

While slightly more males than females enjoyed LAU a lot, far more females than males enjoyed it quite a bit; overall, once again, females liked LAU either a lot or quite a bit more than males did, and quite a bit more males didn’t play it at all.

The percentage of people who liked LAU a lot or quite a bit goes down with age at first, only to jump back very high for the 45+ cohort. They’re still the majority for all age cohorts, and once more, only a few younger players disliked it.

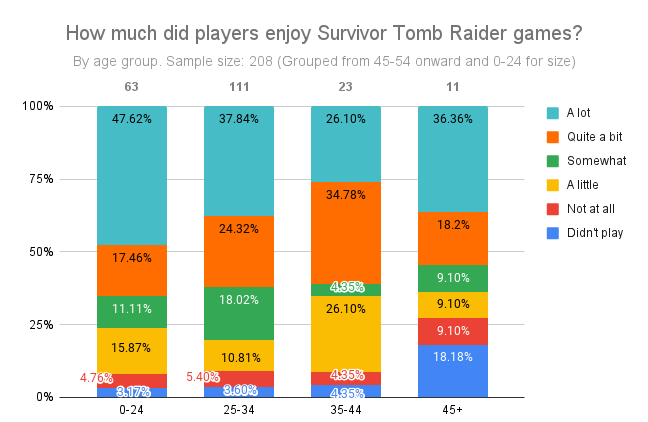

Survivor trilogy (Tomb Raider, Rise of the Tomb Raider, Shadow of the Tomb Raider)

The Survivor trilogy was liked “a lot” by the fewest people; it was liked “quite a bit” by more people than liked the classics the same way, but by fewer people than liked LAU the same way. Overall, it was liked either a lot or quite a bit by 62.5% of the people, behind both the classics and LAU. (Excuse me while I gloat inappropriately.) It was also by far the one most often liked only “a little” or “not at all” (excuse me while I shamelessly keep gloating inappropriately), though it was played by more people than the other two, which, again, is understandable because it’s newer.

Females and males liked the Survivor games “a lot” or “quite a bit” in pretty much the same percentages; slightly more males liked them in percentage, but the difference is really small. Females are also less “undecided” (as I perhaps inappropriately call those who responded “somewhat”); they were fairly more likely than males to like the Survivor trilogy only a little or to not have played it, but they disliked it more or less the same. The sample is small, but this is only the first piece of evidence suggesting something that I wasn’t expecting to find out: overall, females seem to like older Tomb Raider games more than males, and that applies to versions of Lara as well. I was honestly expecting the opposite.

Age-wise, the Survivor games are less liked as age goes up. All in all, the percentage of people who liked them either a lot or quite a bit doesn’t go down very much with age, but the percentage of those who liked them a lot very much does, only to recover 10-something percentage points in the 45+ cohort. Unsurprisingly, fewer people in this cohort than in others have even played any Survivor games. The size of the cohorts varies quite a bit, so even though we’re talking percentages, I’d still take these results with a grain of salt.

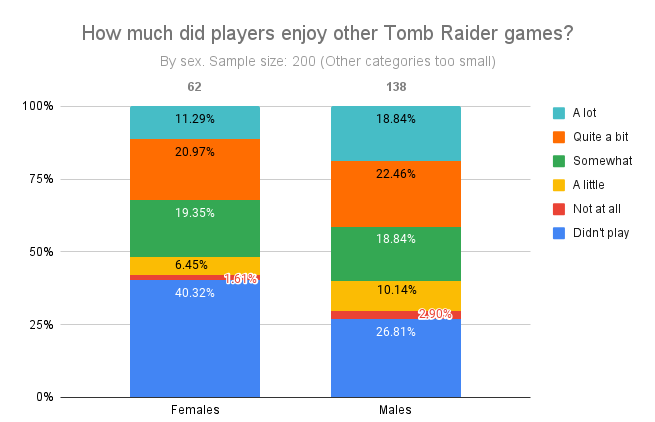

Other Tomb Raider games (everything else!)

This chart looks a lot more fragmented, and predictably, a lot of people haven’t played Tomb Raider games that aren’t exactly part of a main timeline. I asked about these other games only for completeness’ sake; I haven’t played any myself and know little-to-nothing about them.

Females seem less interested in “secondary” Tomb Raider games, if you’ll pardon the expression. Other than that, with the exception of the “a lot” and “a little” people, the rest of the percentages are overall quite similar.

Again, the different sample sizes make a comparison difficult, but it’s nonetheless interesting that younger players are the most likely not to have played other Tomb Raider games (which are fairly recent). I guess only the older, hardcore fans might be interested in trying anything Tomb Raider they can get their hands on? The rest of the chart feels somewhat scattered, with larger percentages of “undecided” people than before.

Lara(s)

Knowing which Lara people like the most is interesting per se, but this set of questions too was meant to help me detect bias—for example, were people who liked CLL more or less likely to say that she was oversexualised? What about people who liked Survivor Lara more? Let’s see.

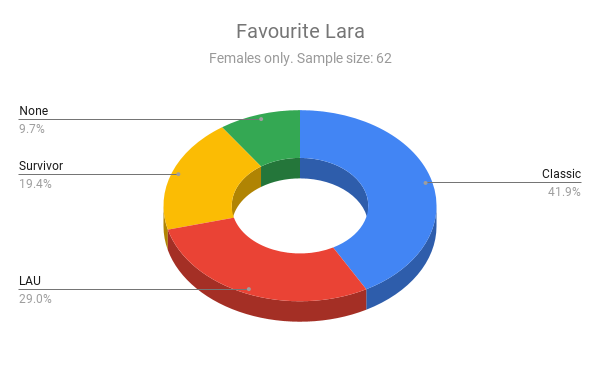

Favourite Laras

Respondents could pick only one version of Lara as their favourite, and the question was all-encompassing: it asked which was their favourite Lara considering everything about her.

Well, Classic Lara wins hands down. LAU Lara almost ties with Survivor Lara, and a fair bit of people had no preference. I thought it would be interesting to see how these percentages would change if I looked at females and males separately, so I did. And I was surprised again.

Female respondents prefer Classic and LAU Lara. Survivor Lara is left quite a bit behind, and Classic Lara is again the winner. Classic Lara is also the males’ favourite, but in their case, Survivor Lara overtakes LAU Lara.

If you look at the percentages, it’s quite obvious that males like Survivor Lara more than females, while females like CLL more than males do. Again, I was expecting the opposite. Don’t get me wrong, ladies: I’m on team CLL too, but I thought the idea was that Survivor Lara was meant to be more relatable for you specifically. If that’s true, it might not have worked that well. As you can see in the chart below, Survivor Lara is the least favoured version among female respondents across all age cohorts: only around 20% of each cohort prefers her. LAU Lara is much appreciated by younger females and less so by older ones, whereas Classic Lara’s popularity grows with age.

The same chart for males reveals that a larger percentage of each age cohort favours Survivor Lara, even though percentages go down with age; LAU Lara’s popularity seems to overall decrease with age, while classic Lara’s popularity goes up with age.

An obvious explanation for this might be that people who grew up with Lara are likely to be more fond of her previous incarnations, and they are all in their 30s and older. At least for males, Survivor Lara is more popular with the younger ones, possibly because she’s newer and they’re likely to have met her before CLL. For females, though, Survivor Lara just isn’t all that popular, regardless of the respondents’ age.

Who is attracted to which Lara?

This was another interesting one. It was a multiple choice question, because the same person could be attracted to more than one version of Lara. This means that any percentages in the following charts may well add up to more than 100%, and that’s not a mistake.
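A minimal sketch of why a tick-all-that-apply question produces shares that sum past 100%: each option is divided by the number of respondents, not by the number of ticks. The four respondents below are made up for illustration and are not survey data.

```python
from collections import Counter

# Each set holds the versions of Lara one (hypothetical) respondent ticked.
answers = [
    {"Classic", "LAU"},
    {"LAU", "Survivor"},
    {"LAU"},
    set(),  # ticked nothing: attracted to no version
]

def option_shares(answers):
    """Share of respondents who ticked each option (denominator = respondents)."""
    n = len(answers)
    ticks = Counter(option for ticked in answers for option in ticked)
    return {option: count / n for option, count in ticks.items()}

shares = option_shares(answers)
total = sum(shares.values())
print(shares, total)
```

Here three of the four respondents ticked LAU, one ticked Classic and one ticked Survivor, so the shares are 75%, 25% and 25%, which already add up to 125%.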

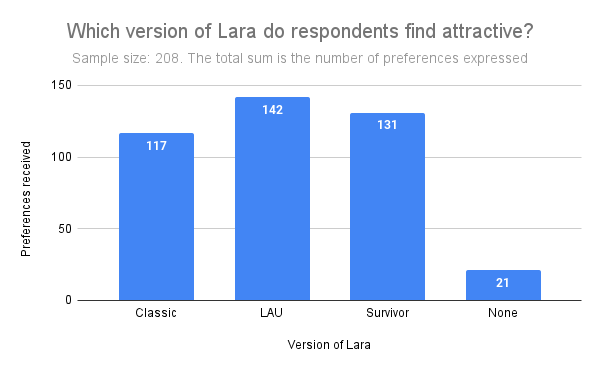

For the longest time after I shared the survey with the world, Survivor Lara was trailing badly behind, but then she recovered quite a bit, overtaking classic Lara by some margin. However, LAU Lara is the clear winner of the attractiveness competition, and only 21 people weren’t attracted to any version of Lara. If we look at the same chart sex-wise, guess what we’ll find?

That’s right. Albeit by a small margin, females are more likely than males to find CLL attractive, and less likely than males to find Survivor Lara attractive. It’s also interesting that a higher percentage of males than females didn’t find any Lara attractive.

I must say, however, that these results got me confused. The question was:

“Whether or not you think she was oversexualised, did you personally find Lara physically attractive? Tick the box for each version of Lara that you find attractive, if any.”

Maybe I phrased it unclearly, because what I meant was basically: “Tick the box for each version of Lara you’d gladly sleep with.” I wanted to know who was actually aroused by her, again because a) curiosity, and b) to detect bias: for example, are the people who’d sleep with Lara less likely to think she was oversexualised? Of course, the female sample was much smaller than the male sample, and god knows how many bisexuals or lesbians there might have been, so let’s look at these results a bit more in depth.

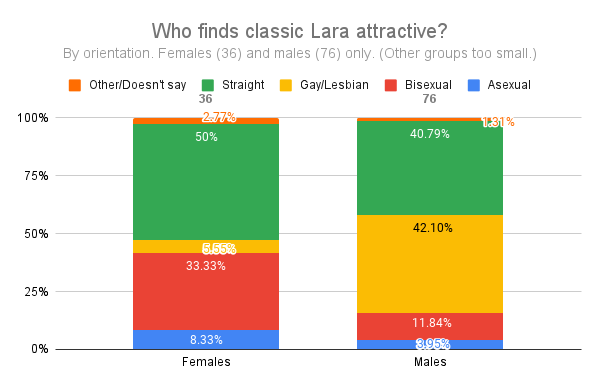

Well then. Among the females who said they found Classic Lara attractive, a whopping 50% are straight. Only 5.55% are lesbian, and while a non-negligible 33.33% are bisexual, I’d like to direct your attention to the column on the right, where it says that forty-freaking-two percent of males who said they personally found Classic Lara physically attractive are gay. Definitely, either my question was really unclear or I must have missed a memo, because last I checked, “straight” meant that you’re only attracted to the sex opposite to yours, and “gay” meant that you’re only attracted to your same sex. Anyways…

Okay, apparently straight females and straight males are attracted in equal percentage to LAU Lara, and a ton of gay males are attracted to her compared to gay females. Once again, bisexual females absolutely crush bisexual males, and even asexual females are more likely to be attracted to her than asexual males (which was even more true for classic Lara, by the way).

Well, well, well. What do we have here? As usual, bisexual females are obliterating bisexual males, and in percentage, quite a bit more of them are attracted to Survivor Lara than any other Lara. Interestingly, far more straight males than straight females find Survivor Lara attractive, and if you look at the total numbers on top of the columns, you’ll also see that there are quite a bit more males who find Survivor Lara attractive than males who find Classic Lara attractive, while for LAU the number is nearly the same. My sample size may be small, but the big-boobs-to-attract-the-lads theory seems to be slightly faltering.

In case you’re curious, these are the people who didn’t find any Lara attractive.

We can try to look at this issue from a slightly different perspective. Which versions of Lara are attractive to each sex, when categorised by orientation? Let’s start with males.

So, the straight dudes (again, the ones supposed to be attracted to the allegedly oversexualised versions of Lara, which allegedly were oversexualised precisely to attract the straight dudes) are literally the least likely to be attracted to CLL. If it wasn’t for the impressively consistent asexual dudes, they’d be the most likely to be attracted to Survivor Lara. Gay guys are much more into CLL and much less into Survivor Lara (whatever that means), whereas bisexual dudes are almost as consistent as the asexuals. Now, what’s the situation like for the gals?

Straight ladies are really not into Survivor Lara much, unlike pretty much everyone else. I dunno, maybe CLL was “female enemy number one”, but love thy enemy, I guess? LAU Lara is very much attractive according to pretty much every female in the sample regardless of orientation, whereas lesbians don’t seem to be crazy about Classic Lara. Also note how only a small percentage of straight females (and of gay guys, too) said they weren’t attracted to any Lara. I suspect a lot of people interpreted the question as: “Which Lara do you think is generally attractive?”, but I did say “which one do you personally find physically attractive”, didn’t I?

I must confess that one of the reasons I conducted this poll was because I was specifically interested in the perception that straight people have of each version of Lara, especially the older versions. This is not to discriminate against non-straight people, but because during my research I got the strong impression that CLL was especially polarising for straight people—forbidden erotic dream for the men, unbeatable virtual “competitor” for the women. This isn’t exactly my opinion, but something I think many people think. The charts we’ve just seen seem to contradict that, at least for males (I’m still unsure what to make of the fact that straight women said they’re attracted to any Lara at all), but maybe there are other things at play; for example, age.

The chart says quite clearly that younger straight male fans are less attracted to CLL than they are to Survivor Lara, and vice-versa. A possible explanation is that people who are now in their 30s and above grew up with classic or LAU Lara, whom they probably encountered in their teens. Likewise, younger people are likely to have met Survivor Lara in their teens, and these events may well have played a big role in defining what these people find more or less attractive. So, just like the chart says, older straight males are attracted to CLL more, and younger ones are attracted to Survivor Lara more. The same overall trend is still there if we consider which Laras are attractive to males in general, regardless of orientation.

As cohorts age, Survivor Lara goes down, Classic Lara goes up, and LAU Lara goes overall up. (Interestingly, she’s rather popular with men up to 24 years of age. That doesn’t surprise me.) Another possible explanation for these trends is that maybe what is generally considered attractive is slowly changing for whatever reason, moving from the Classic-Lara type to the Survivor-Lara type. People who were teens when Classic Lara was all the rage are now on the right side of that chart, while people who are teens right now are on the left.

If we go back for a moment to favourite Laras and we plot them against the ages of straight dudes, we see once again a similar pattern, so overall, it seems that both in terms of physical attraction and overall appreciation for the character, Survivor Lara is more popular with younger (straight) males and less with older ones, while the converse is true for CLL—especially Classic Lara, whose trend is often more clear than LAU Lara’s.

Now let’s talk about the ladies. Pretending for a moment that there’s nothing odd about the straight ones being attracted to another woman, in certain regards their preferences are opposite to those of straight males.

Younger straight females seem to think Survivor Lara is about as attractive as a sun-dried tomato, but the situation changes quickly with age. CLL is on a slight downward trend, but overall is always considered very attractive, and always at least as attractive as Survivor Lara. If we consider all females instead, the situation is rather different.

Survivor Lara still goes down by age; CLL goes overall up and is often at least as attractive as Survivor Lara, but often the difference is minimal. This is due mostly to bisexual gals who fancy her quite a bit (unlike gay gals, for some reason).

By the way, if Survivor Lara was far from being the favourite of females in general, she practically doesn’t exist for straight females, who basically don’t give a flying rat’s arse about her.

However, the chart is rather fragmented, and that’s probably because the sample is small. A much larger and more balanced sample might well turn these findings on their head.

Oversexualisation of Lara

Here we are going to see what people thought of the idea that CLL was oversexualised. First, let’s look at the demographics.

Demographics

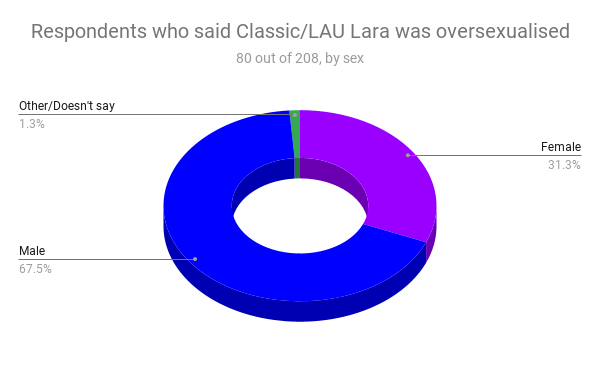

Interestingly, nearly 50% of the respondents said no. Some were undecided, but less than 40% said she was oversexualised, a total of 80 people. Of these, 31.3% were female and 67.5% were male.

That’s not surprising, because the male part of the population was so much larger than the female part; as a matter of fact, males and females answered the question in strikingly similar percentages.

So, at least we know for sure that neither males nor females are more biased in one way or the other on this matter. Orientation, however, does seem to play a role.

I was expecting the straight females to be harsher on Lara, but they weren’t. Bisexual females were, and I can’t help but notice how they also were those who preferred Survivor Lara more strongly.

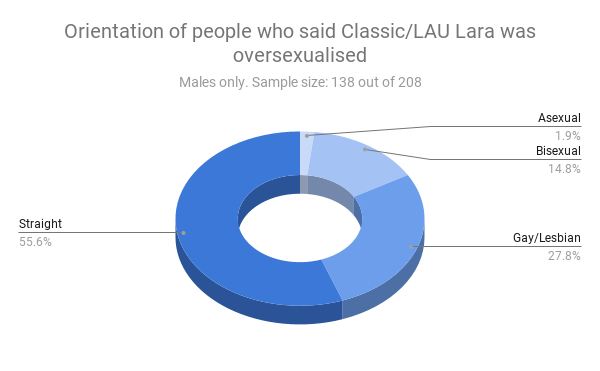

Straight dudes were more likely than other dudes to think CLL was oversexualised, and again, as we’ve seen their sympathies lie mostly with Survivor Lara. Preference for a specific version of Lara appeared to be a strong bias in this sense, as we’ll see soon. Age, instead, doesn’t seem to be very important.

Lara bias

In this section we’re going to see whether which Lara respondents preferred or were attracted to skewed their opinion on the oversexualisation of CLL. Spoiler alert: it obviously did.

A large majority of people whose favourite Lara is Survivor Lara thinks CLL was oversexualised. The same chart for people whose favourite Lara was Classic or LAU Lara is nearly the mirror image of the chart above.

However, in percentage, more CLL fans said “yes” than Survivor Lara fans said “no”, so, if I can be a bit biased myself, I’d say that CLL fans are maybe a little bit less biased than Survivor Lara fans?

Sexual attraction was an even stronger bias. Basically 52% of people who were attracted to Survivor Lara thought CLL was oversexualised, against a mere 36% of people who were attracted to CLL.

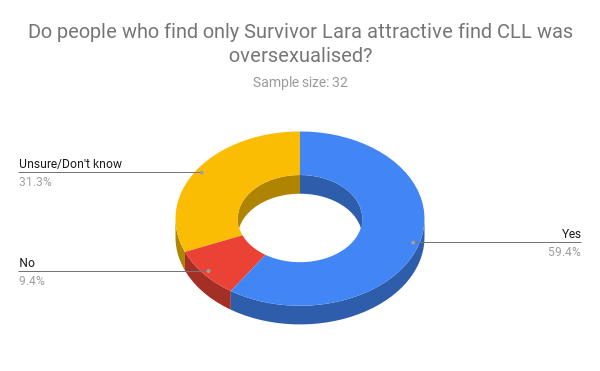

If we look at people who were exclusively attracted to either Survivor or CLL Lara, the difference is even more stark.

At a glance, I’d say that having the hots exclusively for CLL makes you a lot more biased in her favour than having the hots for Survivor Lara only makes you biased against CLL.

Series bias

Did the respondents’ tomb-raiding preferences influence their opinion on the oversexualisation of Lara? They did.

It’s fairly clear that, the more you enjoyed the Survivor trilogy, the more likely you are to think that CLL was oversexualised. To be fair, while the trend is there, it’s less obvious than other trends. For example, it’s absolutely crystal clear that, the more you enjoyed the classic games, the less likely you are to think that CLL was oversexualised.

A very similar correlation links your opinion on the oversexualisation of CLL to your enjoyment of the LAU trilogy.

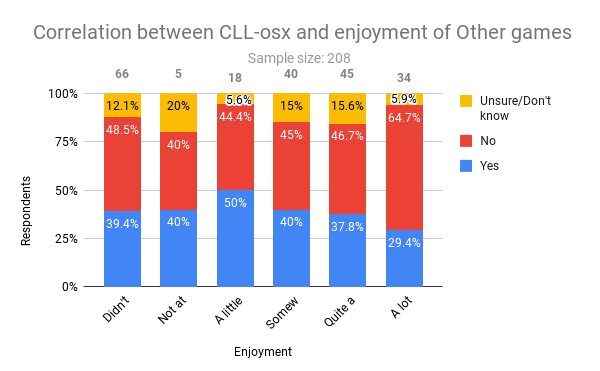

By the way, I’m rather amazed at how people who didn’t play a specific series all tended to say yes, no, or don’t know in similar percentages—though all biased towards the yes. However, enjoyment of other Tomb Raider games didn’t seem to be such a strong bias.

To look at things from a slightly different perspective, this is how much players who said CLL was oversexualised enjoyed different Tomb Raider series.

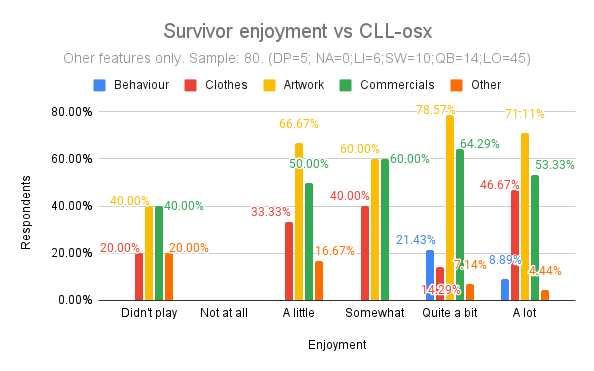

Basically, of the 80 people who said CLL was oversexualised, more than 50% liked Survivor games a lot, and none disliked them. Then again, none of them much disliked any Tomb Raider series, and after all, among the CLL-osx-positive people (that is, those who said CLL was oversexualised), many enjoyed the classics and LAU as well. Be that as it may, it is true that, according to this (small) sample, if you think CLL was oversexualised, you’re more likely to have enjoyed Survivor games more than you enjoyed others.

How was CLL oversexualised?

Respondents who said CLL was oversexualised were asked in what way they thought this was done. If you said she wasn’t oversexualised, you never saw that question. It was a multiple choice question, whose options were:

- Her face was too sensual

- Her breasts were unrealistically large

- Her waist was unrealistically thin

- Her hips were unrealistically wide

- Her behaviour was too suggestive

- Her clothes were too revealing

- Promotional artwork for the games was sometimes inappropriate/suggestive

- Her appearances in unrelated commercials were inappropriate/suggestive

- Other

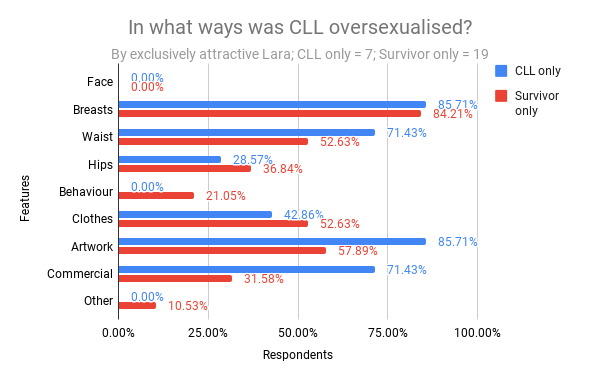

It probably wasn’t a good idea to ask the question this way. For example, a lot of people might easily think that Lara’s breasts were unrealistically large without necessarily thinking that this constituted oversexualisation. I realised this only too late into the game, but anyway, here are the results, which I also showed in the video.

Honestly, I wish the waist option had had the most votes. After all the measurements I have done, I’m very much convinced that Lara’s boobs were fine. Big? Sure, no doubt about that. Too big, or impossible? Nope. The waist, however, was well beyond the threshold of anorexia for an adult woman. Her hips were as common as they come, and frankly, I’d really like to know in what way her behaviour was ever too suggestive. (I mean in the games; commercials are another story, which is why it’s a separate option.)

The distribution of the answers doesn’t change very much if you categorise them by sex, though some things do stick out.

Pretty much the same percentage of males and females agree that the boobs were too big. Females, however, were quite a bit less likely to say the waist was too thin (seriously, ladies?) or that the hips were too wide, and were more likely to pick all other options. Like I said before, on the assumption that CLL was especially polarising for straight folks, I wanted to know how the answer distribution changed if you categorised them by orientation. The radar chart below shows what options straight females, straight males, and everyone else were more likely to pick.

I think it’s pretty obvious that straight people focussed on Lara’s physical features way more than all the others. Likewise, straight people focussed a lot less on non-physical features than all the others. Straight females in particular were a bit more likely than straight men to have an issue with Lara’s breasts, and a lot less likely than straight males to have an issue with her waist. In fact, they were the least likely. (Again: seriously, ladies?) More straight males pinned down the waist problem than anyone else—though it may well be that if you look into the “Others” group more in detail, someone did better than that. I don’t have the strength to make more charts, sorry.

More interesting patterns emerge if you look at the distribution of answers by which Laras were the respondents’ favourite, or which ones they were attracted to.

Those who prefer Survivor Lara were the most likely to blame the boobs, but thankfully also the waist. At the same time, they were also less likely to focus on non-physical features, unlike those whose sympathies lie with CLL or those who have no preference. It’s interesting how “neutral” parties (that is, non-straight people or people with no preference for a specific Lara) tend to focus on her physicality much less. This pattern doesn’t seem to be as pronounced when you look at the answer distribution categorised by which Lara respondents were attracted to.

With few exceptions, the points tend to be a lot more concentrated, and for once, even those with no preference focussed somewhat more on the physical features. (Then again, their sample size is only 5.) This might be because these people include those who found more than one version of Lara attractive. However, if we focus exclusively on people who found either Survivor Lara or CLL attractive, it’s not like there was a lot of bias, in my opinion.

Agreement on the boobs is the same, while people attracted only to CLL were much more likely than Survivor-only people to blame the waist, the artwork, or commercials. Maybe because they’re more knowledgeable about CLL than the other group of people, who maybe don’t even know about the artwork or the commercials that much? However, the people with the hots for Survivor Lara only were the only ones to say that CLL was also oversexualised in some “other” way. If you too think this is true, feel free to share in the comments below, I’m curious.

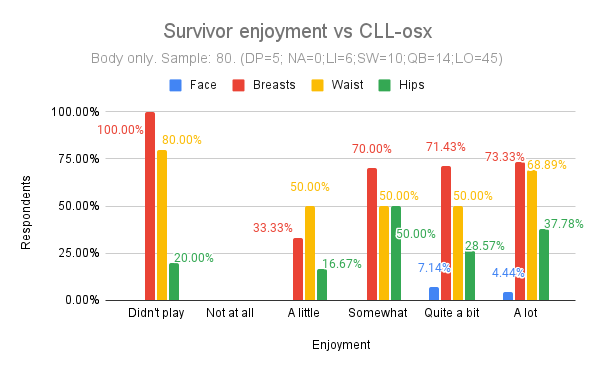

The next eight charts are about the correlation between how much players enjoyed different Tomb Raider series and which features of CLL they thought were oversexualised. They’re meant to show if your game preferences can make you biased against (or in favour of) CLL. Since the features that could be oversexualised are so many, I’ve split them into two groups; the first four charts are about CLL’s body features only, and the last four about all the others.

These charts divide the 80 people who said CLL was oversexualised into six groups of enjoyment levels (from those who didn’t play the games to those who enjoyed them a lot), and then tell what percentage of each group thought a specific feature was oversexualised. So, for example, just above 60% of those who didn’t play the classic games thought that CLL’s waist was unrealistically thin. Again, percentages don’t have to add up to 100% because you could vote for multiple features. Keep in mind that different enjoyment groups have different sizes, so the results aren’t necessarily all equally significant. The sizes are indicated in the subtitle of each chart.
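The calculation behind each bar can be sketched as follows: group the respondents by enjoyment level, then divide the number who ticked a feature by the size of that group. The rows, level labels and feature names below are hypothetical placeholders, not the survey data.

```python
from collections import defaultdict

# (enjoyment level for one series, features ticked) per hypothetical respondent.
rows = [
    ("didn't play", {"waist", "breasts"}),
    ("didn't play", {"breasts"}),
    ("a lot", {"artwork"}),
    ("a lot", {"waist", "artwork"}),
    ("a lot", {"breasts"}),
    ("a lot", {"clothes"}),
]

def feature_share_by_group(rows, feature):
    """Fraction of each enjoyment group that ticked the given feature."""
    sizes = defaultdict(int)
    hits = defaultdict(int)
    for level, features in rows:
        sizes[level] += 1
        if feature in features:
            hits[level] += 1
    return {level: hits[level] / sizes[level] for level in sizes}

waist_share = feature_share_by_group(rows, "waist")
print(waist_share)
```

Because the denominator is the group size, a group of two where one person ticked "waist" shows 50%, while a group of four with one tick shows 25%. That is exactly why the small groups deserve extra caution.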

Very few people thought CLL’s face was too sensual—too few to say anything about it. It applies to all charts, so I won’t be discussing that at all. The kind of bias I was expecting was that each feature would get more votes from people that didn’t enjoy the classics very much, and vice-versa. That seems to be true enough for the boobs, whereas the likelihood of thinking that her waist was unrealistically thin is very much constant. The likelihood of thinking that her hips were unrealistically wide seems to go up with enjoyment, which sort of flies in the face of my bias hypothesis.

The number of votes that every feature gets seems to drop dramatically with LAU enjoyment:

If you liked LAU “somewhat” or more, it seems you’re going to be a lot more lenient on CLL’s body features, but the catch is that the “not at all” and “a little” groups are very small compared to all the others, so I don’t feel like I can draw any conclusion with a lot of confidence.

Similarly, while there does seem to be a slight increase in the likelihood of voting for CLL’s body features as your enjoyment of Survivor games goes up, I don’t think it’s pronounced enough to say that enjoying Survivor games makes you biased in this sense. Interestingly, it seems that the more you like other Tomb Raider games, the less likely you are to think CLL’s hips were too wide, but you’re also more likely to think her waist was too thin.

At this point I should note that I’m doing this mostly for fun and curiosity, and not to stir controversy within the Tomb Raider community. Even if the data was sufficiently high-quality to draw definitive conclusions about who’s biased in what way (which it isn’t), I don’t think it’d be worth starting a fandom war over it. So please don’t, okay? Let’s move on to the other features.

I don’t think that the “behaviour” and “other” options have been voted for enough to even muse about them, so let’s forget about them.

At a glance, the more you enjoyed the classics, the less likely you are to think that CLL’s clothes were too skimpy. Contrariwise, you’re more likely to blame inappropriate artwork and commercials. The same seems to be true for LAU, Survivor, and other games enjoyment; the only exception is that, the more you liked Survivor games, the more likely you seem to think that CLL’s clothes were too skimpy.

Perception of CLL’s official measurements

Everyone who took my survey was asked how well they thought that CLL’s measurements matched the official measurements (OMs for short) she’s supposed to have, that is 34D-24-35, or 97-61-89 in centimetres. That’s especially tricky for the bra, as you’ll know if you have watched my video.
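As a quick sanity check on the unit conversion, here is a small sketch. It converts only the waist and hips, because the number in a bra size such as 34D is a band size and not a bust circumference; the 97 cm bust figure is taken from the text as-is and merely converted back to inches for reference. The helper name is illustrative.

```python
CM_PER_INCH = 2.54  # exact by definition

def inches_to_cm(inches):
    """Convert inches to centimetres, rounded to the nearest whole centimetre."""
    return round(inches * CM_PER_INCH)

waist_cm = inches_to_cm(24)   # 60.96 rounds to 61
hips_cm = inches_to_cm(35)    # 88.9 rounds to 89

# Going the other way for the bust figure quoted in centimetres.
bust_in = 97 / CM_PER_INCH    # a little over 38 inches
print(waist_cm, hips_cm, round(bust_in, 1))
```

The waist and hips match the centimetre figures quoted above. The bust value does not follow from "34" by plain conversion, which is the bra-size trickiness in a nutshell.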

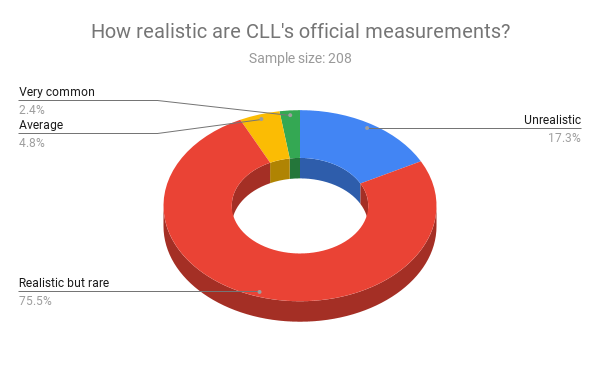

First of all, let’s see how realistic people thought CLL’s official measurements were.

As a side note, a few people complained that they had no idea what the measurement numbers even meant and that I should have had an “I don’t know” option. True, but I was afraid that I’d wind up with a lot of “I don’t know”s, especially from the gents, and that would have made the question useless. I hope those amongst you who didn’t know googled it, but judging from the pie chart above, the respondents very much knew what they were talking about.

CLL’s official measurements are perfectly fine. Her body shape is known as an hourglass figure, which is very much supermodel-like, but realistic in that there are people who have it and didn’t have to undergo surgery or anything crazy like that to get there. Still, CLL’s measurements are rare, especially together: it’s of course a lot easier to find women who match only one or two of those measurements rather than all three. According to this old study I dug out, out of a sample of 6300 US women, 12.5% at most had an hourglass figure, and the percentage can be lower depending on the age cohort. Frankly, I expect that the people who answered “average” are those who had no clue what the numbers meant and picked what seemed to be the safest option, while the “very common” people were just trolling.

Anyway, how much did the sex of the respondents influence their answer? Not very.

The vast majority of the ladies agree that CLL’s OMs are realistic but rare; 19.3% think they’re out of this world; 3.2% played it safe or were supermodels who only know other supermodels, and 1.6% were pulling my leg.

In contrast, 76.8% of the gents (a mere percentage point more than the gals) thought CLL’s OMs are realistic but rare; only 15.2% thought they’re unrealistic (compare that to the ladies’ 19.3%); 5.8% were daydreaming, and 2.2% had smoked something bad.

Jokes apart, it’s interesting how virtually the same percentage of males and females gave the reasonable answer. Females who didn’t were more likely than the males to think the official measurements are unrealistic, and less likely than the males to think they are average or common. Think of it what you will, I don’t want to say anything that could be used against me by either sex. (Also, I’m not blaming anyone for not giving the “right” answer; you don’t have to know.)
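
If you want to double-check the “mere percentage point” bit, here’s a minimal Python sketch. It assumes that the four answers add up to 100% for each sex and that the rounded percentages quoted above are good enough; the variable names are made up for illustration and don’t come from my spreadsheet.

```python
# Shares of the other three answers, as quoted in the text (in percent).
ladies_other = [19.3, 3.2, 1.6]  # unrealistic, average, very common
gents_rare = 76.8                # "realistic but rare", as quoted

# Assumption: the four answers sum to 100%, so the ladies'
# "realistic but rare" share is whatever is left over.
ladies_rare = 100 - sum(ladies_other)

gap = gents_rare - ladies_rare

print(f"ladies, realistic but rare: {ladies_rare:.1f}%")
print(f"gents minus ladies: {gap:.1f} percentage points")
```

Because the inputs are rounded to one decimal place, the result can be off by a tenth of a point or so.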

The next interesting question is whether people who thought CLL was oversexualised had a different perception of the OMs than the rest.

The short answer is “not really”. Regardless of what they thought about the oversexualisation of CLL, the vast majority of the respondents thought the official measurements are realistic but rare. The unsure people were somewhat more likely to think the OMs were unrealistic, and together with the “not oversexualised” gang, they were the only ones to think that the OMs could be very common. It’s interesting how the “oversexualised” people are the most balanced: they’re the least likely to think the OMs are unrealistic (although not by much), and also the least likely to think they’re average or very common.

You have already seen the chart below in my video, but here’s how people thought CLL’s average measurements compared to the OMs.

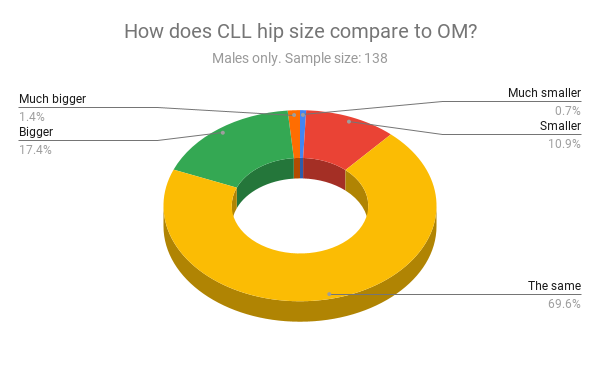

In general, for all three measurements, a majority thought the OMs matched CLL’s average measurements, which was true only in the case of the hips. For some reason, the overwhelming majority of respondents got this one right: nearly 65% of them said CLL’s hips were the same as stated in the OMs.

Thank god, no one said CLL’s waist was much bigger than the OMs, but still, far too many people thought it was the same or bigger, and only very few realised just how ridiculously tiny it actually was. I guess “smaller” and “much smaller” are rather subjective terms, but anyway I’m firmly in the “much smaller” camp. Let me remind you that, according to my measurements, CLL’s waist was on average twelve centimetres (almost 5 inches) smaller than the OMs. It was only about 80% of what it should have been, that is some 20% smaller.
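
For those who like to see the arithmetic spelled out, here’s a small Python sketch of the waist numbers. It only uses the official 61 cm waist and the twelve-centimetre average difference mentioned above; the variable names are hypothetical.

```python
CM_PER_INCH = 2.54

official_waist_cm = 61.0  # the "24" in the OMs, in centimetres
shortfall_cm = 12.0       # average difference according to my measurements

measured_waist_cm = official_waist_cm - shortfall_cm
ratio = measured_waist_cm / official_waist_cm
shortfall_in = shortfall_cm / CM_PER_INCH

print(f"measured waist: {measured_waist_cm:.0f} cm")  # 49 cm
print(f"share of official waist: {ratio:.0%}")        # 80%
print(f"shortfall: {shortfall_in:.1f} in")            # 4.7 in
```

In other words, twelve centimetres is a bit under five inches, and a 49 cm waist is about four fifths of the official 61 cm.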

A lot fewer people thought the boobs were on target—which they weren’t—and a lot more thought they were bigger or much bigger, which they weren’t either. According to my measurements, they were smaller, but not much.

Measuring the size of waists and hips can be reduced to what is basically a one-dimensional problem, but that’s harder to do with breasts or bras, which is why in reality I “blame” respondents much less than I did in the video (where I was joking, by the way). In some games, Lara’s boobs do look huge, for example in Tomb Raider 4 and 5. They were bigger than they were in Tomb Raider 2, but nowhere near as much as they looked because of the shading and visible cleavage. Also, the stupidly tiny waist (and shoulders, in the case of Tomb Raider 4-5) really doesn’t help you get a good sense of the breasts’ actual size. In fact, virtually all sources I found complain about the boobs being impossibly huge, but few even say anything about the waist, and those who do fail to realise that the waist is the main reason the boobs look so big. (Only one source realised this.) Let me put it this way: Lara’s breasts were too big compared to her waist, but not in general. Instead, her waist was just too tiny for an adult woman, period.

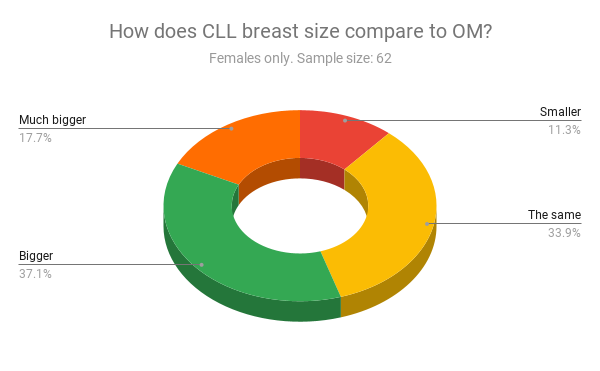

That said, did the sex of the respondents influence how well they thought CLL’s measurements matched the OMs? Oh, yes. This is what female respondents thought of Lara’s boobs.

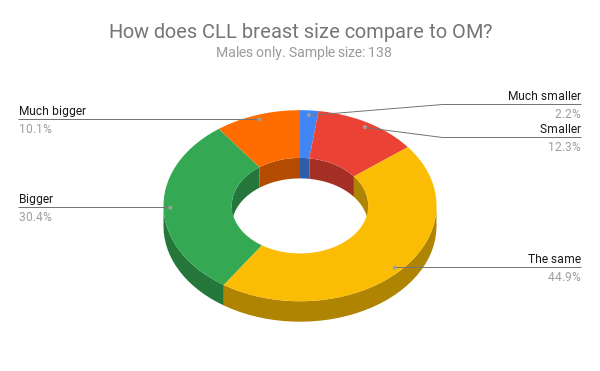

This is what the males thought.

Females were consistently more likely than males to overestimate CLL’s breast size compared to the OMs; males were more likely to say they were the same or smaller. Keeping in mind the discussion we’ve just had and the fact that females and males of all sexual orientations are included in the charts, think of it what you will. I’m not gonna touch that with a ten-foot bra.

Interestingly, and perhaps unsurprisingly, the size of CLL’s waist was a lot less polarising than the boobs. You can hardly tell the difference between the female chart,

and the male chart.

Sex seems to correlate with what the respondents thought of the hips, but in a confusing way. Way more males than females thought that CLL’s hips were the same as the OMs, and females were more likely to say that they were (much) bigger instead, but they were also more likely to say they were smaller…

Finally, I was curious to see how the perception of the OMs influenced the perception of how well CLL’s measurements matched the OMs, if at all. Basically, I wanted to see if there were people who thought stupid things like “Lara’s boobs were unrealistically big, and they were smaller than the official size, which is very common.” Believe it or not, there were people like that. Wat.

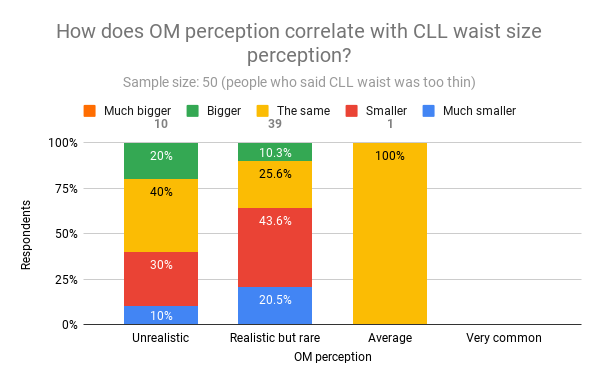

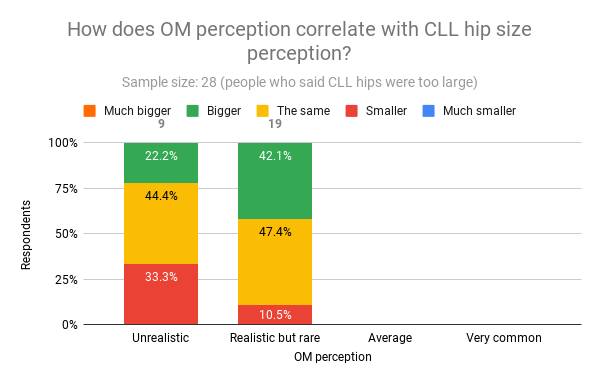

In this case, the samples are people who said that a certain body measurement of CLL was too big or small. None of them said that the OMs are very common, so that column is always empty. (In one case, the “average” column is empty too.) I’m not going to go into the details of each chart, because this is already far too long, but by inspecting these charts you can get an idea of how realistic/unrealistic people thought that CLL’s measurements or the OMs were. For example, in the chart below, everyone thought CLL’s breasts were unrealistically big. Of these, 11 people said the OMs were unrealistic. Of these 11 people, about 18% thought CLL’s breasts were much bigger than the OMs, which suggests they think her boobs were very unrealistic.

Note how, of the 45 people who said the OMs are realistic but rare, 28.9% said CLL’s boobs were the same size as the OMs. This doesn’t make a lot of sense when you think that all these people also said that CLL’s boobs were unrealistically big. Below are the charts for waist and hips, and you can draw your own conclusions. (If you’re even still reading, that is.)
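
Percentages of tiny subsamples can look more impressive than they are, so it helps to turn them back into head counts. Here’s a minimal Python sketch that does so for the two figures quoted above; `share_to_count` is a hypothetical helper, and rounding to the nearest whole person is my assumption, since the quoted percentages are rounded too.

```python
def share_to_count(total: int, percent: float) -> int:
    """Turn a percentage of a subsample back into a head count."""
    return round(total * percent / 100)

# 28.9% of the 45 "realistic but rare" people
same_size = share_to_count(45, 28.9)

# about 18% of the 11 "unrealistic" people
much_bigger = share_to_count(11, 18)

print(same_size, much_bigger)
```

So that 28.9% is 13 people, and the 18% is just 2.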

Was Survivor Lara oversexualised?

This is mostly for shits and giggles. My deliberately very caustic and sarcastic impression is that there isn’t anything to be sexualised (let alone oversexualised) to begin with; but even if there was, the little I’ve seen of the Survivor trilogy doesn’t seem oversexualised at all to me. Yet, some people disagree. Not many, though.

That’s 13 people. To be more precise,

In percentage, more females than males said “yes”; more females than males were unsure, and fewer females than males said “no”.

This is how, according to these 13 people, Survivor Lara was oversexualised.

Now, in the spirit of scientific enquiry, I took Survivor Lara’s measurements as well, specifically from her ROTTR model. There are no official measurements to compare to, but according to my research, she’s supposed to be 168 cm (5ft 6) tall. The measurements I got for her are 30DD-26-37 (or 32C-26-37, with the +4 rule.) Her over bust is around 34 inches (87 cm) and her under bust is around 29 inches (74 cm). The volume of both her cups should be 960cc, which is the same as Classic Lara’s cup volume from Tomb Raider 2 to Angel of Darkness. Her waist is 2 inches larger than CLL’s OMs and so are her hips. In centimetres, her waist is 67 and her hips are 95. She too has an hourglass-ish figure, but frankly, I don’t think there was anything wrong or oversexualised with her measurements.
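
If you’d like to play with the numbers, here’s a minimal Python sketch that converts Survivor Lara’s measurements from inches to centimetres and compares waist and hips with CLL’s OMs. The dictionaries and the helper are hypothetical names of mine. Note that converting the rounded inch values gives results about a centimetre below the centimetre figures quoted above; my assumption is that this is just rounding in the inch values.

```python
CM_PER_INCH = 2.54

def inches_to_cm(inches: float) -> float:
    """Convert inches to centimetres."""
    return inches * CM_PER_INCH

# Survivor Lara (ROTTR model), in inches, as quoted in the text.
survivor = {"over bust": 34, "under bust": 29, "waist": 26, "hips": 37}

# CLL's official waist and hips, in inches.
official = {"waist": 24, "hips": 35}

for name, inches in survivor.items():
    print(f"{name}: {inches} in = {inches_to_cm(inches):.1f} cm")

# Difference between Survivor Lara and the OMs, in inches.
diffs = {name: survivor[name] - official[name] for name in official}
print(diffs)
```

Both the waist and the hips come out 2 inches larger than the OMs, as stated above.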

As for her face being too sensual, well, let’s just say different people have different definitions of sensual and let’s leave it at that, eh? I didn’t even get halfway through Tomb Raider 2013 before deciding it really wasn’t for me, but I didn’t see any suggestive behaviour or revealing clothes. I wouldn’t know about inappropriate artwork or commercials, so if you do, please do share, I’m curious. Also, what the heck is that “other” that 9 people voted for?

If I had to guess, I’d say it’s the rape/non-rape controversy. There was a scene early on during Tomb Raider 2013 where apparently it was kind of unclear whether a guy was or wasn’t trying to rape Lara. I read that a lot of people were pissed about that. Given the circumstances of that game, I wouldn’t be surprised at all if there was a guy who was trying to rape her. That’s not oversexualisation, that’s crude realism, which Tomb Raider 2013 was obviously trying to go for. (Well, except for the ghosty-monstery bits.) Had the game shown a full-blown rape, that would be one thing, but it didn’t. I really don’t understand why people lose their shit over a scene that suggests that a guy on an island full of literal murderers (Lara included, by the way) might also be a rapist, especially when other things, such as Lara being impaled through her goddamn throat and dying horribly, are not simply subtly implied; they’re very much graphic and in-your-face. A sense of proportion, people: which is a worse sight? A person successfully escaping an implied rape attempt, or the same person getting a pointy wood pole through her neck and dying? I am not sure what to make of the fact that, on some level, people seem to find rape worse and/or less acceptable than brutal murder/death, but I am slightly concerned. They’re both horrible things, but if you had to pick one to happen to you, I doubt you’d go with murder or death.

Look at me, almost defending a game I hated the guts of. It’s true: anything can happen.

Anyway, if that’s not the “other” that the voters were talking about, please let me know in the comments below. The final chart shows how sex influenced the voting of supposedly oversexualised features of Survivor Lara.

There was one gal who obviously hated everything about her (hard not to sympathise), but anyway, it was mostly females who voted for unrealistic body features, whereas males constituted the majority of the “other” gang.

Wrapping up

This is probably the last Tomb Raider-related thing I’m going to write for a while. It’s been a long journey, and frankly, I’m happy to know that, from now on, I’ll only be spending time with Lara when I’m (re)playing a Tomb Raider game.

I hope you found the chronicles (pun intended) of my journey interesting, and I’d like to remind you once more that sample size matters. This sample was small, and its various subsamples were even smaller and often hard to compare to each other. Also, I didn’t really run any fancy analyses on the data—I simply put them together in a way that made sense, made sure that nothing obviously wrong was going on, and plotted a few charts.

See you around, and happy raiding—whatever raiding blend tickles your fancy.